Entropy

No physical system is entirely efficient. Weirdly enough, nobody seems to have named this principle until the 19th century, when physicists coined the term “entropy” to mean roughly “wasted energy,” and declared the second law of thermodynamics: the entropy of an isolated system never decreases. Time flows, almost by definition, in the direction of increasing entropy.

In the 1940s, Claude Shannon had the brilliant insight that information is similar to energy in this regard. No real communication channel is perfect. The receiver of a message can never be absolutely sure that the message wasn’t garbled in transmission. Shannon borrowed the term “entropy” to mean “uncertainty,” especially the kind introduced by noisy communication channels. According to Wikipedia, the mathematical formulas for Shannon entropy and thermodynamic entropy are “formally identical.” (Caveat lector: I’m neither a mathematician nor a physicist.)
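For reference, here are the two formulas as they usually appear in textbooks. The notation is the standard one rather than anything defined above: $p_i$ is the probability of the $i$-th message (for Shannon) or microstate (for thermodynamics), and $k_B$ is Boltzmann’s constant.

$$
H = -\sum_i p_i \log_2 p_i
\qquad\qquad
S = -k_B \sum_i p_i \ln p_i
$$

Apart from the constant $k_B$ and the base of the logarithm, the two expressions have the same shape, which is presumably what “formally identical” refers to.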

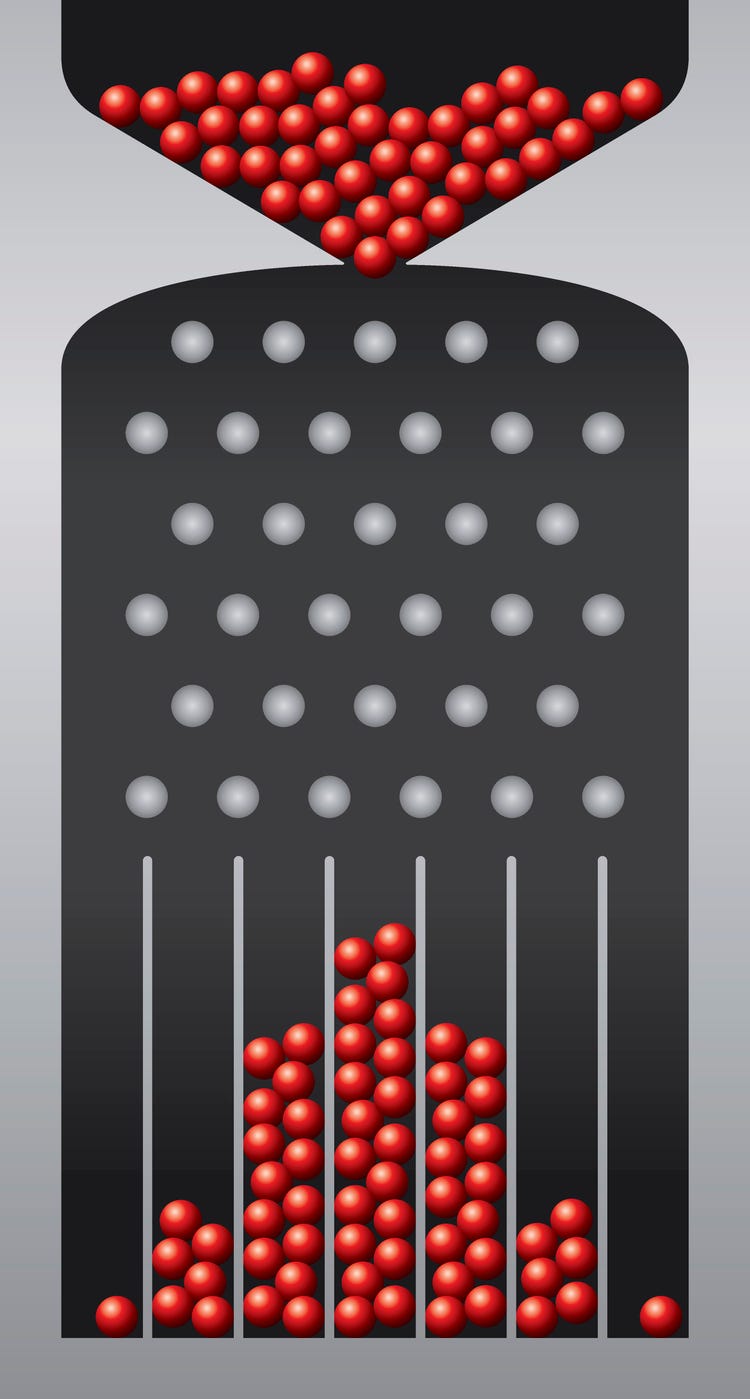

In the vernacular, entropy is often used to mean randomness, but that’s not quite right. Randomness describes a process, such as flipping a coin, whereas entropy describes a state that random processes tend to produce. For example, if you flip a coin 100 times, getting 50 heads and 50 tails (or thereabouts) is a high-entropy state, because lots of different sequences produce 50 heads and 50 tails. In contrast, 100 heads and 0 tails can be reached in only one way, and we associate this low entropy with non-randomness: we suspect the coin flips probably aren’t random. Of course, if you repeated the experiment enough times, you would eventually produce 100 consecutive heads, but that wouldn’t mean the coin flips were non-random. Likewise, a coin you could somehow control remotely might be programmed to produce 50 heads and 50 tails. Entropy isn’t randomness.
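To put rough numbers on the coin example, here is a minimal sketch that counts how many distinct sequences of 100 flips lead to a given number of heads. The function name `sequences_with_heads` is made up for illustration, and the coin is assumed to be fair.

```python
import math

FLIPS = 100


def sequences_with_heads(heads: int, flips: int = FLIPS) -> int:
    """Count the distinct flip sequences that contain exactly `heads` heads."""
    return math.comb(flips, heads)


balanced = sequences_with_heads(50)    # roughly 1e29 different sequences
all_heads = sequences_with_heads(100)  # exactly one sequence
total = 2 ** FLIPS                     # every possible sequence of 100 flips

# Chance that a fair coin lands on exactly 50 heads: a bit under 8%.
probability_balanced = balanced / total

# log2 of the number of sequences is one way to express the entropy in bits.
bits_balanced = math.log2(balanced)
bits_all_heads = math.log2(all_heads)  # log2(1) == 0.0

print(f"50 heads:  {balanced} sequences, about {bits_balanced:.1f} bits")
print(f"100 heads: {all_heads} sequence, {bits_all_heads:.1f} bits")
print(f"P(exactly 50 heads) = {probability_balanced:.4f}")
```

The gap between one sequence and roughly 10^29 sequences is the whole point: the balanced outcome isn’t special, it’s just reachable in vastly more ways.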

So, what’s the relevance of all these forms of entropy to the day-to-day lives of engineering teams? In the absence of external influence, systems tend toward their most easily reachable states. We discussed the similar appearances of randomness and efficiency in “Method or Madness?” but we didn’t talk about how you could leverage that randomness to achieve specific goals.

If you want people to behave in particular ways, make the right things easy and the wrong ones hard. The former is, perhaps surprisingly, more important than the latter, because people’s default behavior is neither inert nor malicious. They’re going to do something, and that something will be whatever seems worth the effort. For example, if you want to increase code coverage, you’re better off making it easy to write and run tests than you are blocking commits that lack sufficient coverage. Almost anything you do to block behavior that you merely consider suboptimal in the abstract, rather than outright wrong, is counter-productive. Trust people’s ad hoc judgement, and make it easy for them to make the right call.

Similarly, if you’re designing an API, make it easy to call the API correctly and hard to call it incorrectly. Choose clear, concise names for functions and parameters, and if those seem insufficient, write accessible documentation. Declare related functions near each other. If parameter order matters, give consecutive parameters different static types.
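As a rough illustration of that last point, here is a small sketch in Python. Everything in it (`SourcePath`, `DestPath`, `describe_copy`) is hypothetical, invented for this example. Note the assumption: Python does not check annotations at call time, so the protection only kicks in if you run a static type checker such as mypy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcePath:
    """Hypothetical wrapper type for the file to copy from."""
    value: str


@dataclass(frozen=True)
class DestPath:
    """Hypothetical wrapper type for the file to copy to."""
    value: str


def describe_copy_loose(src: str, dst: str) -> str:
    # Both parameters are plain strings, so swapping them goes unnoticed.
    return f"copy {src} -> {dst}"


def describe_copy(src: SourcePath, dst: DestPath) -> str:
    # Consecutive parameters have different types, so a static checker
    # can reject a call that passes them in the wrong order.
    return f"copy {src.value} -> {dst.value}"


# The loose version happily accepts swapped arguments.
print(describe_copy_loose("b.txt", "a.txt"))

# The typed version reads unambiguously at the call site.
print(describe_copy(SourcePath("a.txt"), DestPath("b.txt")))

# A static type checker would flag the swapped call below:
# describe_copy(DestPath("b.txt"), SourcePath("a.txt"))
```

The wrapper types cost a few extra characters at each call site, but they turn a silent mistake into one the tooling can catch before the code runs.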

No matter what your goals are, they’re probably reachable in many different ways. Achieving them does not require you to eliminate all those quasi-random processes that may affect your outcome, but are mostly beyond your control. Instead, try to guide those processes ever so slightly in the right direction. When success is your system’s most easily reachable state, entropy becomes your ally.